Hackers linked to the Chinese government have broken into a handful of U.S. internet-service providers in 2024 in pursuit of sensitive information…The hacking campaign, called Salt Typhoon by investigators, hasn’t previously been publicly disclosed and is the latest in a series of incursions that U.S. investigators have linked to China in recent years. The intrusion is a sign of the stealthy success Beijing’s massive digital army of cyberspies has had breaking into valuable computer networks in the U.S. and around the globe.

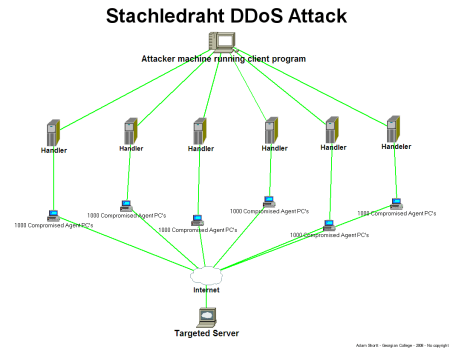

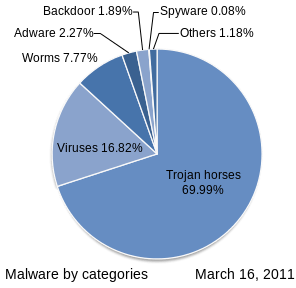

In Salt Typhoon, the actors linked to China burrowed into America’s broadband networks. In this type of intrusion, bad actors aim to establish a foothold within the infrastructure of cable and broadband providers that would allow them to access data stored by telecommunications companies or launch a damaging cyberattack…Investigators are exploring whether the intruders gained access to Cisco Systems routers, core network components that route much of the traffic on the internet, according to people familiar with the matter. Microsoft is investigating the intrusion and what sensitive information may have been accessed, people familiar with the matter said.

China has made a practice of gaining access to internet-service providers around the world. But if hackers gained access to service providers’ core routers, it would leave them in a powerful position to steal information, redirect internet traffic, install malicious software or pivot to new attacks.

In September 2024, U.S. officials said they had disrupted a network of more than 200,000 routers, cameras and other internet-connected consumer devices that served as an entry point into U.S. networks for a China-based hacking group called Flax Typhoon. And in January 2024, federal officials disrupted Volt Typhoon, yet another China-linked campaign that has sought to quietly infiltrate a swath of U.S. critical infrastructure. “The cyber threat posed by the Chinese government is massive,” said Christopher Wray, the Federal Bureau of Investigation’s director, speaking earlier this year at a security conference in Germany. “China’s hacking program is larger than that of every other major nation, combined.”

U.S. security officials allege that Beijing has tried and at times succeeded in burrowing deep into U.S. critical infrastructure networks ranging from water-treatment systems to airports and oil and gas pipelines. Top Biden administration officials have issued public warnings over the past year that China’s actions could threaten American lives and are intended to cause societal panic. The hackers could also disrupt the U.S.’s ability to mobilize support for Taiwan in the event that Chinese leader Xi Jinping orders his military to invade the island….

Excerpts from Sarah Krouse et al., China-Linked Hackers Breach U.S. Internet Providers in New ‘Salt Typhoon’ Cyberattack, WSJ, Sept. 26, 2024