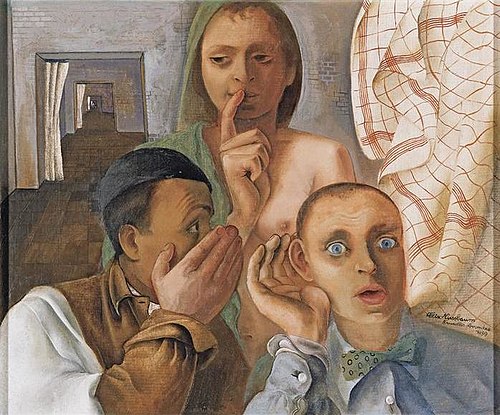

Training artificial-intelligence models demands massive amounts of fresh data. Mercor, a $10 billion startup whose clients have included OpenAI, Anthropic and Meta, has been hit with at least seven class-action lawsuits following a third-party data breach. The breach allegedly exposed Mercor contractor information ranging from recorded job interviews to facial biometric data and screenshots of workers’ computers. A class-action suit filed on April 21, 2026 in Northern California (Ananthula versus Mercor.io) alleged that Mercor accumulated applicant-vetting data, including background checks, which it shared with partners, in breach of federal regulations.

According to plaintiffs, the company’s practices include monitoring its contractors’ computers and sharing that data with clients, using recorded candidate interviews to train AI models, and training client models on materials potentially owned by other companies… Previously, The Wall Street Journal reported that Mercor sought to buy prior work materials from people on LinkedIn: Those people said they didn’t own the rights to such work. Mercor has been offering to pay $100 each for contractors’ personal-finance documents, such as spreadsheets and PowerPoint presentations, according to postings online. The company has offered $100 for people’s Google Maps histories… As workers’ screenshots are alleged to be included in the breached data, contractors are suing Mercor not only for exposing their own personal information but also the information of their other employers…

Mercor hired 30,000 contractors in 2025. Its competitors include Handshake AI, Micro1 and Surge. Recently, LinkedIn started testing its own AI training marketplace. The testing was earlier reported by Business Insider. Handshake co-founder Garrett Lord recently posted to LinkedIn that his company was looking to purchase codebases, internal databases and more. “We anonymize everything,” he wrote. “The stuff that’s not on the internet is what we need.” …The way [AI companies use contractors] makes responsibility for data provenance more ambiguous….“There’s an incentive right now to figure out the rules and regulations after, and to capture as much of the market in the short term first.”

Thitipun Srinarmwong, a plaintiff in the class-action suit filed on April 21, 2026, alleged that project managers and reviewers at Mercor encouraged workers to use real data from their firms, so long as the source was redacted or slightly changed. When Srinarmwong wrote in a way that protected confidential information, Mercor reviewers criticized the work as too short and vague, the suit said. David Bevvino-Berv, a Mercor contractor who previously worked at Goldman Sachs, alleges in the same suit that he saw financial models and prompts that he suspected came from workers sharing proprietary information from other companies… Bevvino-Berv, the plaintiff who worked at Goldman Sachs, alleged that the Insightful software he was required to use as an employee of Mercor captured usage of his bank account, health-insurance portals and around 240 other applications. The suit also alleged that Bevvino-Berv wasn’t “clearly informed” that Insightful would capture anything beyond his Mercor-related work.

Excerpts from Katherine Bindley, Workers Sue $10 Billion AI Startup for Collecting and Exposing Personal Data, WSJ, Apr. 22, 2026