The contest between China and America, the world’s two superpowers, has many dimensions… One of the most alarming and least understood is the race towards artificial-intelligence-enabled warfare. Both countries are investing large sums in militarised artificial intelligence (AI), from autonomous robots to software that gives generals rapid tactical advice in the heat of battle….As Jack Shanahan, a general who is the Pentagon’s point man for AI, put it last month, “What I don’t want to see is a future where our potential adversaries have a fully ai-enabled force and we do not.”

AI-enabled weapons may offer superhuman speed and precision. In order to gain a military advantage, the temptation for armies will be to allow them not only to recommend decisions but also to give orders. That could have worrying consequences. Able to think faster than humans, an AI-enabled command system might cue up missile strikes on aircraft carriers and airbases at a pace that leaves no time for diplomacy and in ways that are not fully understood by its operators. On top of that, ai systems can be hacked, and tricked with manipulated data.

AI in war might aid surprise attacks or confound them, and the death toll could range from none to millions. Unlike missile silos, software cannot be spied on from satellites. And whereas warheads can be inspected by enemies without reducing their potency, showing the outside world an algorithm could compromise its effectiveness. The incentive may be for both sides to mislead the other. “Adversaries’ ignorance of AI-developed configurations will become a strategic advantage,” suggests Henry Kissinger, who led America’s cold-war arms-control efforts with the Soviet Union…Amid a confrontation between the world’s two big powers, the temptation will be to cut corners for temporary advantage.

Excerpts from Mind control: Artificial intelligence and war, Economist, Sept. 7, 2019

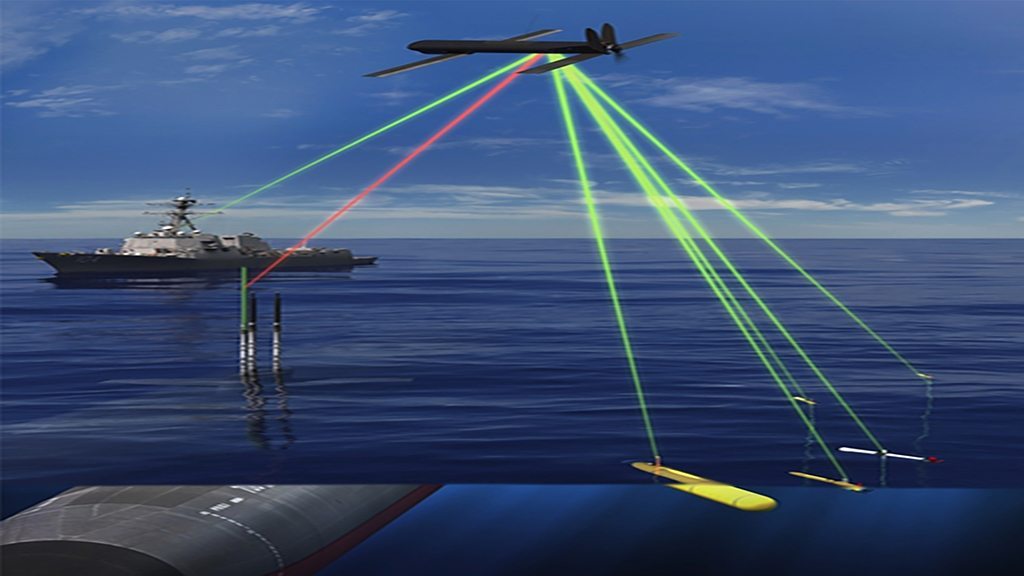

Example of the Use of AI in Warfare: The Real-time Adversarial Intelligence and Decision-making (RAID) program under the auspices of The Defense Advanced Research Projects Agency’s (DARPA) Information Exploitation Office (IXO) focuses on the challenge of anticipating enemy actions in a military operation. In the US Air Force community, the term, predictive battlespace awareness, refers to capabilities that would help the commander and staff to characterize and predict likely enemy courses of action…Today’s practices of military intelligence and decision-making do include a number of processes specifically aimed at predicting enemy actions. Currently, these processes are largely manual as well as mental, and do not involve any significant use of technical means. Even when computerized wargaming is used (albeit rarely in field conditions), it relies either on human guidance of the simulated enemy units or on simple reactive behaviors of such simulated units; in neither case is there a computerized prediction of intelligent and forward-looking enemy actions….

[The deception reasoning of the adversary is very important in this context.] Deception reasoning refers to an important aspect of predicting enemy actions: the fact that military operations are historically, crucially dependent on the ability to use various forms of concealment and deception for friendly purposes while detecting and counteracting the enemy’s concealment and deception. Therefore, adversarial reasoning must include deception reasoning.

The RAID Program will develop a real-time adversarial predictive analysis tool that operates as an automated enemy predictor providing a continuously updated picture of probable enemy actions in tactical ground operations. The RAID Program will strive to: prove that adversarial reasoning can be automated; prove that automated adversarial reasoning can include deception….

Excerpts from Real-time Adversarial Intelligence and Decision-making (RAID), US Federal Grants