About 3 billion people live within 100 miles (160km) of the sea, a number that could double in the next decade as humans flock to coastal cities like gulls. The oceans produce $3 trillion of goods and services each year and untold value for the Earth’s ecology. Life could not exist without these vast water reserves—and, if anything, they are becoming even more important to humans than before.

Mining is about to begin under the seabed in the high seas—the regions outside the exclusive economic zones administered by coastal and island nations, which stretch 200 nautical miles (370km) offshore. Nineteen exploratory licences have been issued. New summer shipping lanes are opening across the Arctic Ocean. The genetic resources of marine life promise a pharmaceutical bonanza: the number of patents has been rising at 12% a year. One study found that genetic material from the seas is a hundred times more likely to have anti-cancer properties than that from terrestrial life.

But these developments are minor compared with vaster forces reshaping the Earth, both on land and at sea. It has long been clear that people are damaging the oceans—witness the melting of the Arctic ice in summer, the spread of oxygen-starved dead zones and the death of coral reefs. Now, the consequences of that damage are starting to be felt onshore…

More serious is the global mismanagement of fish stocks. About 3 billion people get a fifth of their protein from fish, making it a more important protein source than beef. But a vicious cycle has developed as fish stocks decline and fishermen race to grab what they can of the remainder. According to the Food and Agriculture Organisation (FAO), a third of fish stocks in the oceans are over-exploited; some estimates say the proportion is more than half. One study suggested that stocks of big predatory species, such as tuna, swordfish and marlin, may have fallen by as much as 90% since the 1950s. People could be eating much better if fish stocks were properly managed.

The forests are often called the lungs of the Earth, but the description better fits the oceans. They produce half the world’s supply of oxygen, mostly through photosynthesis by aquatic algae and other organisms. But according to a forthcoming report by the Intergovernmental Panel on Climate Change (IPCC; the group of scientists who advise governments on global warming), concentrations of chlorophyll (which helps make oxygen) fell by 9-12% between 1998 and 2010 in the North Pacific, Indian and North Atlantic Oceans.

Climate change may be the reason. At the moment, the oceans are moderating the impact of global warming, though that may not last… Changes in the oceans, therefore, may mean that less oxygen is produced. This cannot be good news, though scientists are still debating the likely consequences. The world is not about to suffocate. But the result could be lower oxygen concentrations in the oceans and changes to the climate, because the counterpart of less oxygen produced is more carbon dioxide left unabsorbed, adding to the build-up of greenhouse gases. In short, the decades of damage wreaked on the oceans are now damaging the terrestrial environment.

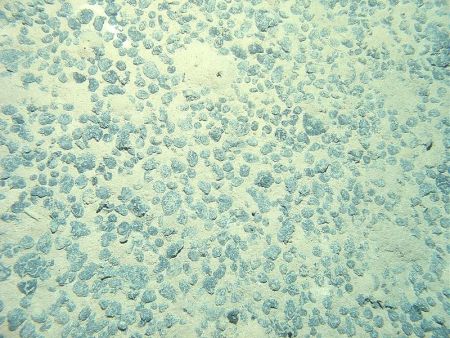

Three-quarters of the fish stocks in European waters are over-exploited and some are close to collapse… Farmers dump excess fertiliser into rivers, and it finds its way to the sea; there cyanobacteria (blue-green algae) feed on the nutrients, proliferate madly and reduce oxygen levels, asphyxiating sea creatures. In 2008, there were over 400 “dead zones” in the oceans. Polluters pump out carbon dioxide, which dissolves in seawater, producing carbonic acid. That in turn has increased ocean acidity by over a quarter since the start of the Industrial Revolution. In 2012, scientists found pteropods (a kind of sea snail) in the Southern Ocean with partially dissolved shells…

The high seas are not ungoverned. Almost every country has ratified the UN Convention on the Law of the Sea (UNCLOS), which, in the words of Tommy Koh, president of the UN conference that negotiated it in the early 1980s, is “a constitution for the oceans”. It sets rules for everything from military activities and territorial disputes (like those in the South China Sea) to shipping, deep-sea mining and fishing. Although it came into force only in 1994, it embodies centuries-old customary laws, including the freedom of the seas, which says the high seas are open to all. UNCLOS took decades to negotiate and is sacrosanct. Even America, which refuses to ratify it, abides by its provisions.

But UNCLOS has significant faults. It is weak on conservation and the environment, since most of it was negotiated in the 1970s when these topics were barely considered. It has no powers to enforce or punish. America’s refusal to ratify it makes the problem worse: although America behaves in accordance with UNCLOS, it is reluctant to push others to do likewise.

Specialised bodies have been set up to oversee a few parts of the treaty, such as the International Seabed Authority, which regulates mining beneath the high seas. But for the most part UNCLOS relies on member countries and existing organisations for monitoring and enforcement. The result is a baffling tangle of overlapping authorities that is described by the Global Ocean Commission, a new high-level lobby group, as a “co-ordinated catastrophe”.

Individually, some of the institutions work well enough. The International Maritime Organisation, which regulates global shipping, keeps a register of merchant and passenger vessels, each of which must carry an identification number. The result is a reasonably law-abiding global industry. It is also responsible for one of the rare success stories of recent decades, the standards applying to routine and accidental discharges of pollution from ships. But even it is flawed. The Institute for Advanced Sustainability Studies, a German think-tank, rates it as the least transparent international organisation. And it is dominated by insiders: contributions, and therefore influence, are weighted by tonnage.

Other institutions look good on paper but are untested. This is the case with the seabed authority, which has drawn up a global regime for deep-sea mining that is more up-to-date than most national mining codes… The problem here is political rather than regulatory: how should mining revenues be distributed? Deep-sea minerals are supposed to be “the common heritage of mankind”. Does that mean everyone is entitled to a part? And how to share it out?

The biggest failure, though, is in the regulation of fishing. Overfishing does more damage to the oceans than all other human activities there put together. In theory, high-seas fishing is overseen by an array of regional bodies. Some cover individual species, such as the International Commission for the Conservation of Atlantic Tunas (ICCAT, also known as the International Conspiracy to Catch All Tuna). Others cover fishing in a particular area, such as the north-east Atlantic or the South Pacific Oceans. They decide what sort of fishing gear may be used, set limits on the quantity of fish that can be caught and how many ships are allowed in an area, and so on.

Here, too, there have been successes. Stocks of north-east Arctic cod are now the highest of any cod species and the highest they have been since 1945—even though the permitted catch is also at record levels. This proves it is possible to have healthy stocks and a healthy fishing industry. But it is a bilateral, not an international, achievement: only Norway and Russia capture these fish and they jointly follow scientists’ advice about how much to take. There has also been some progress in controlling the sort of fishing gear that does the most damage. In 1991 the UN banned drift nets longer than 2.5km (these are nets that hang down from the surface; some were 50km long). A series of national and regional restrictions in the 2000s placed limits on “bottom trawling” (hoovering up everything on the seabed)—which most people at the time thought unachievable.

But the overall record is disastrous. Two-thirds of fish stocks on the high seas are over-exploited, twice the proportion in waters under national jurisdiction. Illegal and unreported fishing is worth $10 billion to $24 billion a year, about a quarter of the total catch. According to the World Bank, the mismanagement of fisheries costs $50 billion or more a year, meaning that the fishing industry would reap at least that much in efficiency gains if it were properly managed.

Most regional fishery bodies have too little money to combat illegal fishermen. They do not know how many vessels are in their waters because there is no global register of fishing boats. Their rules bind only their members; outsiders can break them with impunity. An expert review of ICCAT, the tuna commission, ordered by the organisation itself concluded that it was “an international disgrace”. A survey by the FAO found that over half the countries reporting on surveillance and enforcement on the high seas said they could not control vessels sailing under their flags. Even if they wanted to, then, it is not clear that regional fishery bodies or individual countries could make much difference.

But it is far from clear that many really want to. Almost all are dominated by fishing interests. The exceptions are the organisation for Antarctica, where scientific researchers are influential, and the International Whaling Commission, which admitted environmentalists early on. Not by coincidence, these are the two that have taken conservation most seriously.

Countries could do more to stop vessels suspected of illegal fishing from docking in their harbours—but they don’t. The FAO’s attempt to set up a voluntary register of high-seas fishing boats has been becalmed for years. The UN has a fish-stocks agreement that imposes stricter demands than regional fishery bodies. It requires signatories to impose tough sanctions on ships that break the rules. But only 80 countries have ratified it, compared with the 165 parties to UNCLOS. One study found that 28 nations, which together account for 40% of the world’s catch, are failing to meet most of the requirements of an FAO code of conduct which they have signed up to.

It is not merely that particular institutions are weak. The system itself is dysfunctional. There are organisations for fishing, mining and shipping, but none for the oceans as a whole. Regional seas organisations, whose main responsibility is to cut pollution, generally do not cover the same areas as regional fishery bodies, and the two rarely work well together. (In the north-east Atlantic, the one case where the boundaries coincide, they have done a lot.) Dozens of organisations play some role in the oceans (including 16 in the UN alone) but the outfit that is supposed to co-ordinate them, called UN-Oceans, is an ad-hoc body without oversight authority. There are no proper arrangements for monitoring, assessing or reporting on how the various organisations are doing—and no one to tell them if they are failing.

Governing the high seas: In deep water, Economist, Feb. 22, 2014, at 51
